NVMe over Fabrics (NVMe-oF) is often introduced as a faster way to access storage. That explanation is technically correct, but it misses the real point. NVMe-oF is not simply about improving speed; it is about changing where performance can exist within an infrastructure.

For years, organisations have had to choose between performance and flexibility. Direct-attached NVMe delivers exceptional speed, but it isolates storage inside individual servers. Traditional networked storage centralises capacity, but introduces latency and protocol overhead. NVMe-oF exists to close that gap.

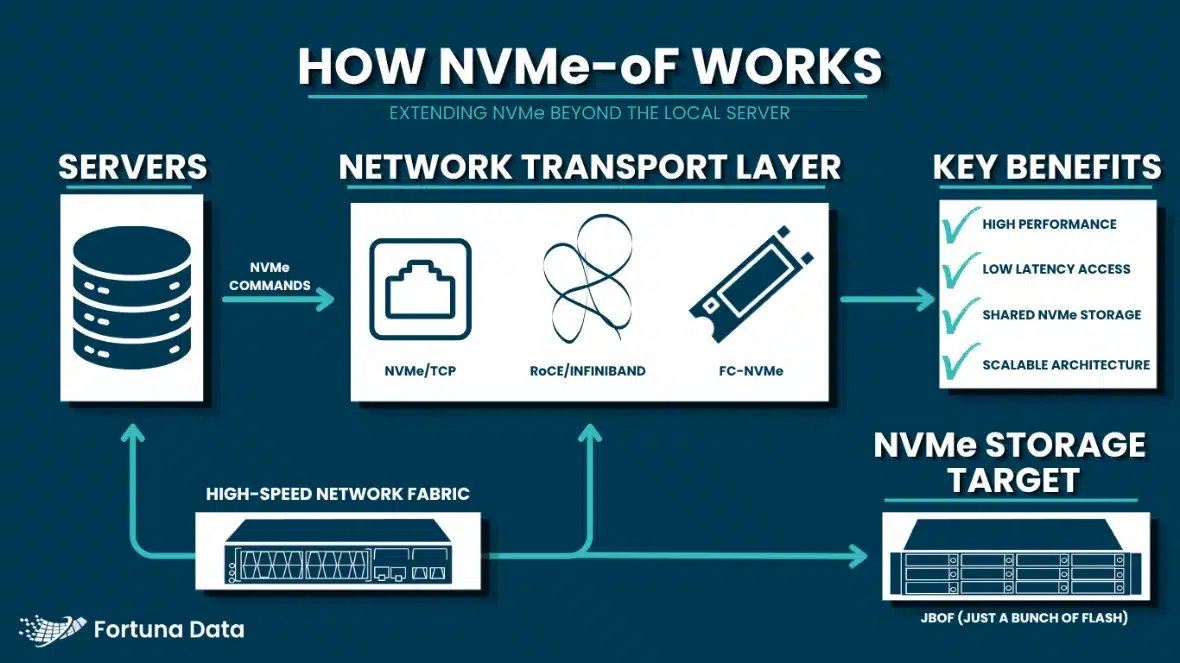

At its core, NVMe-oF extends the NVMe protocol beyond the local server. Instead of communicating with a drive over the PCIe bus, a host can access NVMe storage across a network while still using the same highly efficient command structure. This is why the term “Fabrics” is important. NVMe-oF is not tied to a single transport. It can operate over Fibre Channel (NVMe over Fibre Channel), over RDMA-based networks such as RoCE and InfiniBand, and over TCP on standard Ethernet. Fibre Channel is simply one pathway, not the definition.

This transport flexibility is what has made NVMe-oF viable in enterprise environments. It allows organisations to adopt it within existing architectures rather than forcing a complete redesign. An organisation with a mature Fibre Channel SAN can evolve towards NVMe without abandoning its current fabric, while those operating on Ethernet can introduce NVMe/TCP without investing in entirely new networking stacks. The protocol adapts to the infrastructure strategy, not the other way around.
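To make the NVMe/TCP case concrete, the sketch below builds the `nvme connect` command line that a Linux host with nvme-cli installed would typically run to attach a remote subsystem. It only constructs and prints the arguments; it does not execute anything. The target address (taken from the documentation-only 192.0.2.0/24 range), the subsystem NQN, and the helper name `nvme_connect_argv` are illustrative assumptions, and 4420 is used as the commonly chosen NVMe-oF port. Fibre Channel is left out because it uses a different addressing format.

```python
import shlex


def nvme_connect_argv(transport: str, address: str, subsystem_nqn: str,
                      port: int = 4420) -> list[str]:
    """Build (but do not run) an nvme-cli connect command for an IP-based transport."""
    if transport not in {"tcp", "rdma"}:
        raise ValueError(f"unsupported transport in this sketch: {transport!r}")
    return [
        "nvme", "connect",
        f"--transport={transport}",   # fabric type
        f"--traddr={address}",        # target IP address
        f"--trsvcid={port}",          # target port
        f"--nqn={subsystem_nqn}",     # NVMe Qualified Name of the subsystem
    ]


# Hypothetical target and subsystem name, for illustration only.
argv = nvme_connect_argv("tcp", "192.0.2.10", "nqn.2014-08.org.example:pool1")
print(shlex.join(argv))
```

Switching the same helper to `"rdma"` changes only the transport argument, which mirrors the point above: the host-side workflow stays the same while the fabric underneath varies.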

The reason NVMe-oF has become relevant now is largely due to the limitations of legacy storage protocols. SCSI-based technologies such as iSCSI and traditional Fibre Channel were designed in an era dominated by spinning disks. Their overhead was negligible when device latency was measured in milliseconds. With NVMe flash responding in microseconds, those same protocol layers now account for a meaningful share of each I/O. They add latency, consume CPU resources, and ultimately dilute the performance gains that NVMe is capable of delivering.
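The arithmetic behind that shift can be sketched in a few lines. The figures below are illustrative assumptions chosen only to show the ratio, not measurements of any specific protocol or device: a fixed 50 µs of protocol overhead, a 5 ms spinning-disk access, and a 100 µs flash access.

```python
def protocol_share(media_latency_us: float, protocol_overhead_us: float) -> float:
    """Return the fraction of total I/O latency spent in the protocol stack."""
    total_us = media_latency_us + protocol_overhead_us
    return protocol_overhead_us / total_us


# Illustrative assumptions, not benchmark results.
PROTOCOL_OVERHEAD_US = 50.0   # assumed fixed per-I/O protocol cost
HDD_LATENCY_US = 5_000.0      # assumed spinning-disk access time (5 ms)
NVME_LATENCY_US = 100.0       # assumed NVMe flash access time

hdd_share = protocol_share(HDD_LATENCY_US, PROTOCOL_OVERHEAD_US)
nvme_share = protocol_share(NVME_LATENCY_US, PROTOCOL_OVERHEAD_US)

print(f"Protocol share of latency on disk:  {hdd_share:.1%}")   # 1.0%
print(f"Protocol share of latency on flash: {nvme_share:.1%}")  # 33.3%
```

Under these assumed numbers the same overhead goes from roughly one percent of each I/O to about a third of it, which is why a stack that was acceptable for disk becomes the limiting factor for flash.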

NVMe-oF removes much of that inefficiency. It is designed to preserve the parallelism and low-latency characteristics of NVMe, even when storage is accessed over a network. The result is that performance is no longer confined to the server boundary. Storage can be shared, pooled, and scaled without introducing the same level of compromise that older protocols impose.

However, this does not mean NVMe-oF is universally required. Its value is highly dependent on the workload and the broader infrastructure strategy. In environments where latency directly impacts outcomes, such as AI pipelines, high-performance computing, or real-time analytics, the ability to access remote storage with near-local performance is significant. In these cases, NVMe-oF enables architectures that would otherwise be impractical, allowing compute and storage to scale independently without sacrificing responsiveness.

In contrast, many general enterprise workloads will see limited benefit. File services, backup environments, and standard virtualisation stacks often do not operate at a level where microsecond latency differences materially affect performance. In these scenarios, the additional complexity of NVMe-oF may outweigh its advantages. The technology is powerful, but it is not a blanket replacement for existing storage approaches.

The choice of transport also plays a critical role in how NVMe-oF performs in practice. Ethernet-based NVMe/TCP has gained traction because it offers accessibility and simplicity, running on standard network infrastructure with minimal disruption. RDMA-based implementations, such as RoCE or InfiniBand, deliver the lowest latency and highest throughput, but require more specialised networking capabilities. Fibre Channel provides a middle ground for organisations already invested in SAN environments, offering a familiar operational model while introducing NVMe efficiencies.
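The trade-offs in that paragraph can be written down as a simple rule of thumb. The sketch below is a hypothetical decision helper, not a sizing tool: the `Environment` fields, the `suggest_transport` function, and the order of the checks are all assumptions made for illustration, and a real decision would weigh cost, skills, and workload profile as well.

```python
from dataclasses import dataclass


@dataclass
class Environment:
    """Hypothetical summary of the facts that drive transport choice."""
    has_fibre_channel_san: bool
    has_rdma_capable_network: bool
    needs_lowest_latency: bool


def suggest_transport(env: Environment) -> str:
    """Return 'rdma', 'fc', or 'tcp' using the trade-offs described above."""
    # RDMA gives the lowest latency, but only if the network can support it.
    if env.needs_lowest_latency and env.has_rdma_capable_network:
        return "rdma"
    # An existing SAN investment makes Fibre Channel the middle ground.
    if env.has_fibre_channel_san:
        return "fc"
    # Otherwise NVMe/TCP runs on standard Ethernet with minimal disruption.
    return "tcp"


print(suggest_transport(Environment(False, False, False)))  # tcp
print(suggest_transport(Environment(True, False, True)))    # fc
print(suggest_transport(Environment(False, True, True)))    # rdma
```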

What becomes clear is that NVMe-oF is less about the protocol itself and more about what it enables. It supports a shift towards disaggregated infrastructure, where storage is no longer statically tied to individual servers but exists as a shared, high-performance resource. This aligns closely with how modern data centres are evolving—towards greater flexibility, higher utilisation, and more dynamic allocation of resources.

From a commercial perspective, adoption is measured rather than disruptive. Most organisations are not replacing their entire storage estate with NVMe-oF. Instead, they are introducing it selectively, targeting the workloads and environments where its benefits are clear. This pragmatic approach reflects the reality of enterprise infrastructure decisions, where stability and return on investment are just as important as performance gains.

Ultimately, NVMe-oF should not be viewed as a universal upgrade, but as a strategic tool. When aligned to the right use case, it allows organisations to extend the full value of NVMe beyond the server, enabling architectures that are both high-performing and scalable. When misapplied, it risks becoming an unnecessary layer of complexity.

The difference lies in understanding not just what NVMe-oF is, but where it fits.

NVMe-oF becomes significantly more powerful when combined with a JBOF (Just a Bunch of Flash) architecture.

On its own, NVMe-oF enables high-performance access to remote NVMe storage. When paired with JBOF, it becomes the transport layer that allows disaggregated flash to function as a shared, scalable resource.

JBOF provides the raw NVMe capacity—high-density flash without the constraints of traditional storage controllers. NVMe-oF then allows that capacity to be accessed across the network with minimal latency overhead. The result is an architecture where storage is no longer tied to a single system, but instead becomes a pooled resource that can be dynamically consumed by multiple workloads.

This combination is particularly relevant in environments where performance and flexibility must coexist. Rather than scaling storage and compute together, organisations can scale each independently, improving utilisation and reducing long-term infrastructure inefficiencies.
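A rough sizing comparison shows where the utilisation gain comes from. All figures below are hypothetical: a workload needing 256 cores and 2,000 TB, converged nodes with 64 cores and 50 TB each, and JBOF enclosures of 500 TB. The function names are likewise assumptions for this sketch.

```python
from math import ceil


def converged_nodes(cores_needed: int, tb_needed: int,
                    cores_per_node: int, tb_per_node: int) -> int:
    """Nodes required when every node carries a fixed mix of cores and flash."""
    return max(ceil(cores_needed / cores_per_node),
               ceil(tb_needed / tb_per_node))


def disaggregated_units(cores_needed: int, tb_needed: int,
                        cores_per_node: int, tb_per_enclosure: int) -> tuple[int, int]:
    """Compute nodes and flash enclosures required when each is sized separately."""
    return (ceil(cores_needed / cores_per_node),
            ceil(tb_needed / tb_per_enclosure))


# Hypothetical workload and hardware sizes, for illustration only.
CORES_NEEDED, TB_NEEDED = 256, 2000
CORES_PER_NODE, TB_PER_NODE, TB_PER_ENCLOSURE = 64, 50, 500

nodes = converged_nodes(CORES_NEEDED, TB_NEEDED, CORES_PER_NODE, TB_PER_NODE)
core_utilisation = CORES_NEEDED / (nodes * CORES_PER_NODE)
compute_nodes, enclosures = disaggregated_units(
    CORES_NEEDED, TB_NEEDED, CORES_PER_NODE, TB_PER_ENCLOSURE)

print(f"Converged: {nodes} nodes, {core_utilisation:.0%} of purchased cores used")
print(f"Disaggregated: {compute_nodes} compute nodes + {enclosures} flash enclosures")
```

With these assumed numbers, the capacity requirement forces 40 converged nodes and leaves 90% of the purchased cores idle, while the disaggregated layout needs 4 compute nodes and 4 enclosures.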

In practical terms, NVMe-oF is what makes JBOF viable beyond a single enclosure. Without it, JBOF would remain isolated. With it, JBOF becomes part of a broader, high-performance storage fabric.

Understanding NVMe-oF is one thing. Implementing it correctly within an existing environment is another.

This is where Fortuna Data operates differently.

NVMe-oF and JBOF are not standalone decisions—they sit within a wider architecture that includes compute, networking, data protection, and long-term scalability. The wrong design can introduce unnecessary complexity without delivering meaningful gains.

Fortuna Data works with organisations to:

The focus is not on pushing a specific technology, but on ensuring that when NVMe-oF is deployed, it is done for the right reasons—and delivers against them.

If you’re exploring NVMe-oF, the starting point is not the protocol. It’s the architecture around it.