
JBOF (Just a Bunch of Flash) is one of the more misunderstood components in modern storage architecture. It is often compared directly to traditional storage arrays, but that comparison misses the point. JBOF is not designed to replace an array in the conventional sense. It is designed to remove the constraints that arrays introduce.

At a time when NVMe has dramatically increased the performance potential of flash, the bottleneck has shifted away from the media itself and towards how that media is controlled, accessed, and scaled. JBOF addresses that shift directly.

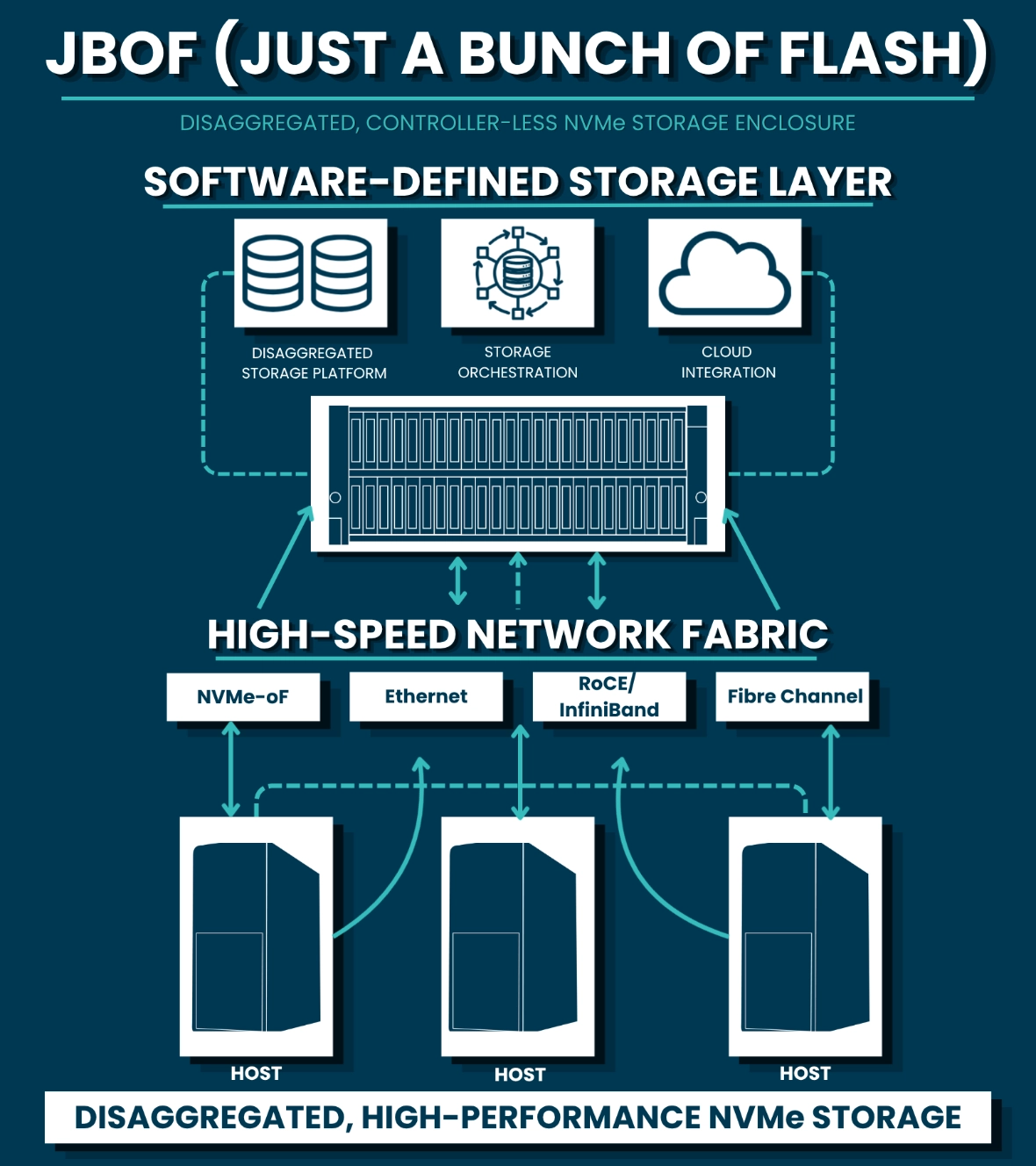

A JBOF is a flash enclosure filled with NVMe SSDs, exposed over a high-speed fabric, but without the traditional storage controller layer found in standard arrays.

Instead of embedding intelligence inside the storage system, JBOF externalises it. The drives are presented across the network, typically via NVMe over Fabrics (NVMe-oF), and the intelligence sits elsewhere in the stack. This could be, for example, a software-defined storage platform or a container-native storage system.

The key principle is simple: separate storage media from storage control.

Traditional storage arrays were built for a different era. They combine compute, memory, networking, and storage media into a single system. While this simplifies management, it also creates limitations.

As flash performance has increased, those limitations have become more visible. Controllers can become bottlenecks. Scaling often requires adding more of everything (compute, cache, and capacity) even when only one resource is needed.

JBOF changes that model.

By stripping away the controller layer, it allows organisations to:

This aligns closely with modern infrastructure design, where flexibility and efficiency are prioritised over tightly coupled systems.
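The scaling argument above can be made concrete with some simple arithmetic. The sketch below is illustrative only: the `ArrayUnit` and `FlashEnclosure` types and the 200 TB figures are hypothetical assumptions, not vendor data. It compares what has to be purchased to add 500 TB when capacity is tied to controllers and when it is not.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class ArrayUnit:
    """One scale unit of a hypothetical integrated array (controllers plus flash)."""
    capacity_tb: float
    controller_pairs: int


@dataclass(frozen=True)
class FlashEnclosure:
    """One hypothetical JBOF enclosure: flash only, no storage controllers."""
    capacity_tb: float


def coupled_growth(extra_tb: float, unit: ArrayUnit) -> dict:
    """Capacity arrives only in whole array units, so controllers come along too."""
    units = math.ceil(extra_tb / unit.capacity_tb)
    return {
        "units_added": units,
        "capacity_added_tb": units * unit.capacity_tb,
        "controller_pairs_added": units * unit.controller_pairs,
    }


def disaggregated_growth(extra_tb: float, enclosure: FlashEnclosure) -> dict:
    """Capacity arrives as flash enclosures; control software is scaled separately."""
    units = math.ceil(extra_tb / enclosure.capacity_tb)
    return {
        "units_added": units,
        "capacity_added_tb": units * enclosure.capacity_tb,
        "controller_pairs_added": 0,
    }


if __name__ == "__main__":
    # Hypothetical sizes chosen purely for illustration.
    print(coupled_growth(500, ArrayUnit(capacity_tb=200, controller_pairs=1)))
    print(disaggregated_growth(500, FlashEnclosure(capacity_tb=200)))
```

In both cases three units are needed to cover 500 TB, but only the coupled model also adds three controller pairs that the capacity requirement never asked for. The compute that runs the storage software still has to exist somewhere in the disaggregated model; the point is that it can be sized on its own terms.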

In a JBOF architecture, the enclosure itself is relatively simple. It provides power, cooling, and high-speed connectivity for NVMe drives. The drives are then made accessible across a network fabric using NVMe-oF.

From the perspective of a host or application, those drives can appear as remote NVMe devices, accessed with minimal latency overhead compared to local storage.
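As a rough illustration of how a Linux host might attach to such an enclosure, the sketch below assembles `nvme discover` and `nvme connect` command lines for the open-source `nvme-cli` tool. It only builds and prints the commands; it does not run them. The `FabricTarget` type, the address `192.0.2.10`, and the subsystem NQN are hypothetical placeholders, and the choice of TCP transport on port 4420 is an assumption rather than a recommendation.

```python
import shlex
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class FabricTarget:
    """Connection details for one NVMe-oF subsystem (all values are placeholders)."""
    transport: str      # e.g. "tcp" or "rdma"
    address: str        # target IP address
    service_id: str     # transport service ID (the port for TCP/RDMA)
    subsystem_nqn: str  # NVMe Qualified Name of the subsystem


def discover_command(target: FabricTarget) -> List[str]:
    """Build the nvme-cli command that lists subsystems offered by the target."""
    return [
        "nvme", "discover",
        f"--transport={target.transport}",
        f"--traddr={target.address}",
        f"--trsvcid={target.service_id}",
    ]


def connect_command(target: FabricTarget) -> List[str]:
    """Build the nvme-cli command that attaches one subsystem to this host."""
    return [
        "nvme", "connect",
        f"--transport={target.transport}",
        f"--nqn={target.subsystem_nqn}",
        f"--traddr={target.address}",
        f"--trsvcid={target.service_id}",
    ]


if __name__ == "__main__":
    # Hypothetical enclosure endpoint; replace with real values before use.
    shelf = FabricTarget(
        transport="tcp",
        address="192.0.2.10",
        service_id="4420",
        subsystem_nqn="nqn.2014-08.org.example:jbof-shelf-1",
    )
    print(shlex.join(discover_command(shelf)))
    print(shlex.join(connect_command(shelf)))
```

Once a connection like this succeeds, the remote namespaces typically show up on the host as ordinary NVMe block devices, which is what allows the software layer above to treat them much like local drives.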

The intelligence (data protection, replication, snapshots, and orchestration) is handled outside the JBOF. This is typically delivered through software-defined storage platforms or container-native storage systems.

The result is an architecture where:

JBOF is not a general-purpose solution. Its value becomes clear in environments where performance, scalability, and flexibility are tightly linked.

In high-performance environments such as AI, machine learning, and HPC, JBOF enables access to large pools of NVMe storage without forcing data locality. Compute resources can scale independently, accessing shared flash with near-local performance characteristics.

It also plays a role in disaggregated infrastructure strategies. As organisations move towards composable architectures, separating storage from compute becomes essential. JBOF provides the physical layer that makes this separation viable without sacrificing performance.

In software-defined storage environments, JBOF allows platforms to operate without being constrained by traditional array hardware. The software layer can control how data is distributed, protected, and accessed, while the underlying flash remains flexible and scalable.
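To give a sense of what "the software layer controls how data is distributed and protected" can mean in practice, here is a minimal sketch of replica placement across enclosures. It is a deliberately simple hash-based scheme written for illustration, not the method used by any particular software-defined storage product. The `RemoteDrive` type, the `place_replicas` function, and the enclosure and drive names are all hypothetical.

```python
import hashlib
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class RemoteDrive:
    """One NVMe drive reachable over the fabric (names are placeholders)."""
    enclosure: str
    drive_id: str


def place_replicas(block_id: str, drives: List[RemoteDrive], copies: int = 2) -> List[RemoteDrive]:
    """Choose `copies` drives for a block, each in a different enclosure."""
    by_enclosure: Dict[str, List[RemoteDrive]] = {}
    for drive in drives:
        by_enclosure.setdefault(drive.enclosure, []).append(drive)
    if copies > len(by_enclosure):
        raise ValueError("not enough enclosures for the requested number of copies")

    # A stable hash means every host computes the same placement for a block.
    digest = int.from_bytes(hashlib.sha256(block_id.encode()).digest()[:8], "big")
    enclosures = sorted(by_enclosure)
    start = digest % len(enclosures)

    chosen = []
    for i in range(copies):
        # Walk consecutive enclosures so no two copies share a failure domain.
        enclosure = enclosures[(start + i) % len(enclosures)]
        candidates = sorted(by_enclosure[enclosure], key=lambda d: d.drive_id)
        chosen.append(candidates[digest % len(candidates)])
    return chosen


if __name__ == "__main__":
    pool = [
        RemoteDrive("jbof-a", "nvme0"), RemoteDrive("jbof-a", "nvme1"),
        RemoteDrive("jbof-b", "nvme0"), RemoteDrive("jbof-b", "nvme1"),
        RemoteDrive("jbof-c", "nvme0"),
    ]
    for replica in place_replicas("volume-7/block-42", pool):
        print(replica)
```

The enclosure contributes nothing to this decision beyond exposing its drives. Which enclosures hold which copies, and how many copies exist, is a policy expressed entirely in software, so it can be changed without touching the hardware.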

Rethinking How High-Performance Storage Is Delivered

Despite its advantages, JBOF is not a universal replacement for traditional storage systems.

In many enterprise environments, the integrated nature of storage arrays remains valuable. Built-in data services, simplified management, and predictable support models reduce operational complexity.

JBOF introduces a different set of requirements. It assumes that the organisation has:

Without these, the benefits of JBOF can be difficult to realise.

The fundamental difference between JBOF and traditional arrays is where intelligence resides.

In a traditional array, the system itself is responsible for:

In a JBOF architecture, those responsibilities move into software.

This shift is significant. It enables greater flexibility and scalability, but it also places more emphasis on the surrounding ecosystem. The storage system is no longer a self-contained solution; it becomes part of a broader architecture.

JBOF should be viewed as an enabler rather than a standalone solution. It provides the foundation for architectures that prioritise:

It is particularly relevant in environments where infrastructure is being redesigned around modern workloads, rather than adapted from legacy systems.

JBOF is not about adding more flash. It is about removing the constraints around how flash is used.

For organisations pushing the limits of performance and scalability, it offers a way to decouple storage from traditional hardware boundaries and build more flexible, efficient systems.

For others, it may introduce unnecessary complexity.

As with NVMe-oF, the value of JBOF is not universal. It depends entirely on how, and why, it is deployed.

JBOF does not operate in isolation. Its value is unlocked through NVMe over Fabrics.

A JBOF enclosure provides high-density NVMe flash, but without a transport layer, that performance remains localised. NVMe-oF enables that flash to be accessed across a network while maintaining the low-latency characteristics that make NVMe valuable in the first place.

This relationship is fundamental.

NVMe-oF allows JBOF to move beyond being a simple enclosure and become part of a shared, high-performance storage architecture. It enables multiple compute nodes to access the same pool of flash, supports disaggregated infrastructure models, and ensures that performance is not lost when storage is centralised.

Without NVMe-oF, JBOF is capacity. With it, JBOF becomes infrastructure.

JBOF is often discussed as a hardware decision. In reality, it is an architectural one.

Deploying JBOF introduces new considerations around data services, orchestration, networking, and workload alignment. Without the right design, the benefits of disaggregation can quickly be offset by complexity.

Fortuna Data approaches JBOF differently. The focus is not on the enclosure itself, but on how it integrates into a complete, high-performance environment.

This includes:

The outcome is not just access to flash, but a storage architecture that is aligned to how the business actually consumes and scales data.