Data Backup Strategy

Complexities of Backup

Backing up a server, physical or virtual, along with its associated software applications should be easy, yet 9 times out of 10 there are problems. For years, enterprise customers have relied on industry leaders such as Commvault Software, NAKIVO, Veritas Technologies, Retrospect, Veeam Software, Dell Technologies and IBM to provide a complex and diverse backup solution that ensures data is backed up and secured.

Any large-scale business today has multiple levels of data protection in place to stop unauthorised access to the network: anti-virus, malware scanners, firewalls and so on. The list is endless, and these products are kept up to date with patches and updates, and even replaced on a regular basis. I bet the same isn’t true of the business backup software. To be honest, it’s probably doing an okay job, but it isn’t great.

The reason is that a restore of a data backup could be required in 5 or 10 years, and without the backup application that wrote it, the data is lost forever. Humans like to feel comfortable and we don’t like change that makes us uncomfortable. Even though our backups are only okay, we really don’t want the hassle of working out how we are going to bring back that data from 5-10 years ago. Ideally, restore that legacy data and put it in the cloud, then after “X” years delete the lot and put the money towards infrastructure upgrades.

Environmental Concerns

How many times a week does a van turn up to collect our backup data to store offsite, adding to our CO₂ footprint? Are businesses still doing this today? Of course they are; remember, we don’t like change. Sometimes I want to shout out “wake up people, get out of the comfort zone” and talk to a business that understands all types of data.

Technology Advancements

The problem is that many of these backup applications were written when the only things that needed to be backed up were servers and a few software applications. Within the past 10 years there have been massive advances in computing applications, processing power and storage density. The backup software gradually became more monolithic:

- What to back up? – Files, folders, drives, applications, VMs

- Where to back it up? – Local, remote, disk, tape or cloud

- When to back it up? – Hourly, daily, weekly, monthly

- Why do we need to back it up? – Compliance, governance, security, business process

The complexities just keep coming:

- Backup to tape

- Backup to disk

- Backup to disk then tape

- Disk-2-Disk backup

- Centralise backup

- Centralise backup then move to the cloud

- Centralise backup and replicate to DR

- Backup to Cloud

Nowadays we must contend with:

- GDPR

- Data Encryption at rest

- Data Deduplication

- Data Encryption when replicating

- Where does my cloud provider store data at rest?

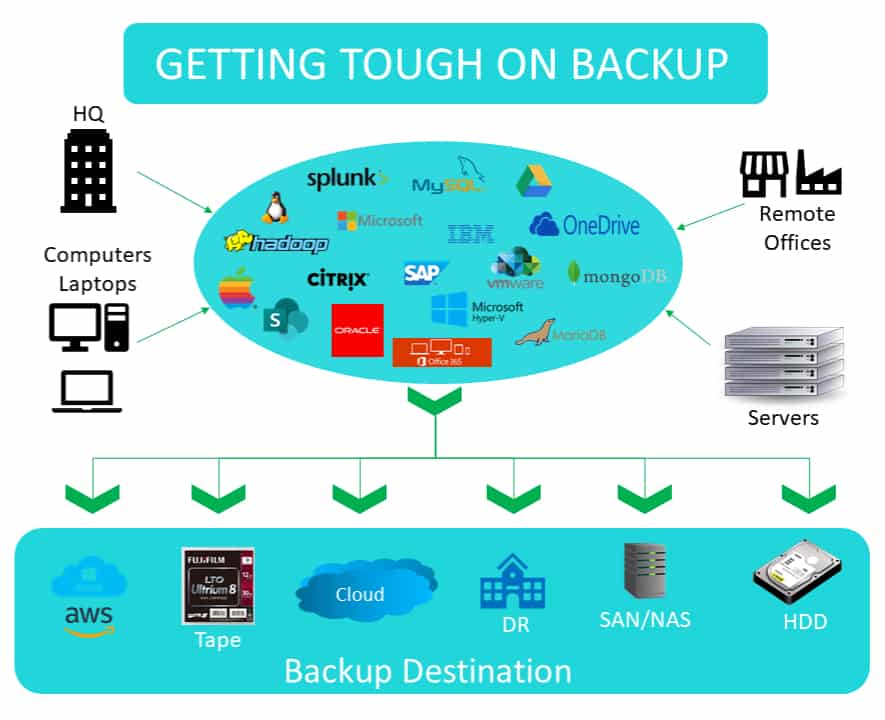

Today backup doesn’t just cover servers residing in the data centre; it needs to provide data protection for additional computers within the business, including:

- Desktops

- Workstations

- Laptops/Notebooks

- Edge Computing

- Storage systems

- Remote sites or offices

Data Backup tasks

Today, performing a nightly or weekly backup of a system or application isn’t enough. Businesses are looking for CDP (continuous data protection) and near-instantaneous recovery of applications and systems in the event of data loss or failure. The data backup solution must be able to scale, perform well, be easy to use and meet the needs of a business by backing up a multitude of applications and systems.

Data today is stored and accessed in a huge variety of ways, and it is this complexity that makes it difficult to find a solution that can simply and easily back up a diverse range of platforms. Most large businesses today do not rely on a single vendor for backup, and this adds to the cost and complexity.

What will happen in 5 years?

Humans like to generate and share data. By 2020, 44,000,000,000,000,000,000,000 bytes of data, or quite simply 44 ZB (zettabytes), were expected to be generated annually, and the figure is increasing. By 2025, IDC estimates we will be creating 463 EB (exabytes) of data daily, or roughly 169 ZB annually, which is nearly 4x the 2020 estimate. Clearly a data backup solution needs to be able to evolve, scale and adapt to meet these requirements.
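The conversion from a daily figure in exabytes to an annual figure in zettabytes is easy to check. The short Python sketch below assumes decimal (SI) units and a 365-day year; the function and variable names are illustrative only.

```python
# Decimal (SI) storage units, assumed for the estimates quoted above.
EB = 10**18  # bytes in one exabyte
ZB = 10**21  # bytes in one zettabyte


def daily_eb_to_annual_zb(eb_per_day: float, days: int = 365) -> float:
    """Convert a daily volume in exabytes to an annual volume in zettabytes."""
    return eb_per_day * EB * days / ZB


annual_2020_zb = 44 * 10**21 / ZB            # the 2020 figure, written out in bytes
annual_2025_zb = daily_eb_to_annual_zb(463)  # 463 EB created per day
growth = annual_2025_zb / annual_2020_zb     # how many times larger 2025 is

print(f"2025 estimate: {annual_2025_zb:.1f} ZB per year")
print(f"Growth over 2020: {growth:.1f}x")
```

Under those assumptions, 463 EB a day works out at about 169 ZB a year, a little under four times the 2020 estimate.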

Where is all this data coming from?

More people have access to computers, mobile devices, drones and IoT, and above all to technology that enables higher-resolution images, software to analyse complex data sets, messaging apps and smart technologies: phones, fridges, washing machines, TVs… the list and possibilities are endless.

The AMD EPYC 7002 Series Processors set a new standard for the modern data centre with 64 cores and 8.34 billion transistors on a 7 nm die, and these are being used to analyse and process information. Just 5 years ago an Intel Xeon E5 had 18 cores and 5.5 billion transistors on a 22 nm die. Things are getting smaller and faster. Hard disk capacities 5 years ago were at 4TB; today 18TB drives exist from Seagate, and it is predicted that by 2025 hard disk capacities will be 100TB!

Take action?

What can we do? Sit back, do nothing and wonder why we aren’t providing data protection?

Most businesses change their operating systems more often than their backup software.

Every 3 years a company should be looking at its data backup strategy and asking: is it good enough? Scrap that, is it the best we can afford? Would you buy a 5-year-old disk drive, or would you want the latest NVMe SSDs? Backup software is no different; if you are not providing data protection, don’t cry when you’re fired for losing data. Just because “we’ve always done it like this” doesn’t mean we can’t change.

When I started my business 29 years ago, backup software was hideously complex to configure and a nightmare to install: loading backup agents, creating backup schedules and sorting out tape rotations. Believe it or not, some companies are still doing this in 2022/2023!

Network Performance

The data many businesses generate is certainly going to grow over the next 5 years. That data needs to be stored, protected and backed up. Taking steps and planning now for what is going to happen will be easier than dealing with a mountain later rather than the molehill we have today.

Backing up 50TB today could easily become 250TB in 6 years. How much data can be sent over a 32Gb/s FC network using four ports in 12 hours? 1Gb/s = 125,000,000 bytes per second, so 125,000,000 x 32 x 4 = 16,000,000,000 bytes per second, or 0.014552 terabytes per second. That is roughly 52.39 terabytes per hour, or 628.64 terabytes over 12 hours. At that rate it is going to take nearly 5 hours to back up 250TB of data.
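The arithmetic above can be reproduced with a few lines of Python. This is a rough sketch and it makes several assumptions: nominal line rate with no protocol overhead, the binary terabyte (2^40 bytes) that the 0.014552 figure implies, and helper names invented purely for illustration.

```python
BYTES_PER_GBIT = 125_000_000  # 1 Gb/s expressed in bytes per second
TIB = 2**40                   # binary terabyte, matching the figures above


def throughput_bytes_per_sec(link_gbps: float, ports: int,
                             utilisation: float = 1.0) -> float:
    """Aggregate nominal throughput across several identical ports."""
    return link_gbps * BYTES_PER_GBIT * ports * utilisation


def hours_to_transfer(data_tib: float, bytes_per_sec: float) -> float:
    """Hours needed to move data_tib binary terabytes at the given rate."""
    return data_tib * TIB / bytes_per_sec / 3600


rate = throughput_bytes_per_sec(32, 4)     # four 32Gb/s FC ports
tib_per_hour = rate * 3600 / TIB           # volume moved in one hour
tib_in_window = tib_per_hour * 12          # volume moved in a 12-hour window
backup_hours = hours_to_transfer(250, rate)

print(f"{rate:,.0f} bytes/s, {tib_per_hour:.2f} TB/h, {tib_in_window:.2f} TB in 12 h")
print(f"250TB backup: {backup_hours:.2f} hours")
```

Lowering the `utilisation` argument below 1.0 shows how quickly the backup window stretches once the links are shared with production traffic.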

Remember, upgrading the data backup solution is also going to involve a network infrastructure upgrade. These figures are based on a local network inside the data centre. If we choose to put our data in the cloud and we have a 1Gb/s WAN connection, turn the lights off and come back in roughly 25 days! Both these figures assume full network utilisation.
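To see how sensitive the WAN figure is to utilisation, here is a minimal Python sketch. It assumes 250 binary terabytes and a nominal 1Gb/s link; the 70% utilisation value is a made-up example, not a measurement.

```python
def days_to_transfer(data_bytes: float, link_gbps: float,
                     utilisation: float = 1.0) -> float:
    """Days needed to push data_bytes over a link at the given utilisation."""
    if not 0 < utilisation <= 1:
        raise ValueError("utilisation must be in the range (0, 1]")
    bytes_per_sec = link_gbps * 125_000_000 * utilisation
    return data_bytes / bytes_per_sec / 86_400  # 86,400 seconds in a day


data = 250 * 2**40                                   # 250TB, binary units
full_rate_days = days_to_transfer(data, 1.0)         # link fully dedicated to backup
shared_days = days_to_transfer(data, 1.0, 0.7)       # hypothetical 70% utilisation

print(f"Full utilisation: {full_rate_days:.1f} days")
print(f"70% utilisation:  {shared_days:.1f} days")
```

With binary terabytes the full-rate transfer comes out at about 25.5 days; with decimal terabytes it is about 23 days. Either way, the first full backup to the cloud is measured in weeks.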

Continuous Data Protection

Any business today needs to think about running at least CDP (continuous data protection). There is no way our WAN link speeds in the UK are going to increase 5-10x by 2025, therefore a petascale business needs to start thinking about how it can back up and store data locally in the data centre and not in the cloud. Remember, a data backup should be a guarantee that you can get your data back in a timely manner. Restoring a single file from the cloud is very different from restoring 10TB of cloud-resident data from the backup of a failed server.
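One way to make "timely" concrete is to compare the estimated restore time against a recovery time objective (RTO). The Python sketch below is illustrative only: the 4-hour RTO is a hypothetical target, the link speeds reuse the 1Gb/s WAN and four-port 32Gb/s FC examples from earlier, and decimal terabytes at full line rate are assumed.

```python
def restore_hours(data_tb: float, link_gbps: float,
                  utilisation: float = 1.0) -> float:
    """Hours to pull data_tb decimal terabytes over a link of link_gbps."""
    bytes_per_sec = link_gbps * 125_000_000 * utilisation
    return data_tb * 10**12 / bytes_per_sec / 3600


RTO_HOURS = 4.0  # hypothetical recovery time objective

cloud_hours = restore_hours(10, 1)        # 10TB over a 1Gb/s WAN link
local_hours = restore_hours(10, 32 * 4)   # same data over four 32Gb/s FC ports

for name, hours in (("cloud", cloud_hours), ("local", local_hours)):
    verdict = "meets" if hours <= RTO_HOURS else "misses"
    print(f"{name}: {hours:.2f} h, {verdict} the {RTO_HOURS:.0f} h target")
```

Under these assumptions the cloud restore takes a little over 22 hours, while the local restore completes in minutes, which is the gap the paragraph above is warning about.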